Go With The Flow: Churn-Tolerant Decentralized Training of Large Language Models

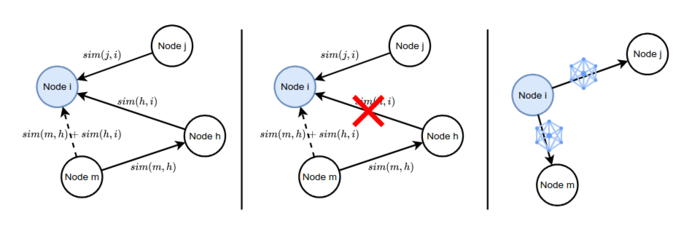

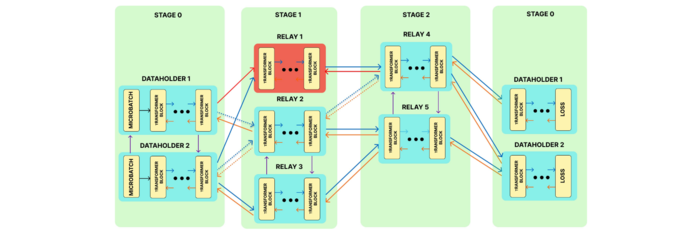

Go With The Flow (GWTF) is the first practical, crash-tolerant decentralized framework for collaboratively training LLMs on heterogeneous volunteer clients. It tolerates node churn and unstable networks by using a novel decentralized flow algorithm to optimize how microbatches are routed between clients. Evaluations on GPT-like and LLaMA-like models show that GWTF reduces training time by up to 45% in challenging, geographically distributed settings.
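
To make the idea of microbatch routing under churn concrete, here is a minimal Python sketch. It is not GWTF's flow algorithm, which this summary does not specify. It is a simple centralized greedy stand-in that assumes each client serves one pipeline stage and has a fixed per-iteration capacity. The names `Node`, `route_microbatches`, and `link_cost` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical volunteer client serving a single pipeline stage."""
    name: str
    stage: int          # index of the pipeline stage this node hosts
    capacity: int       # microbatches it can accept per iteration (assumed)
    alive: bool = True  # set to False to simulate a crash


def route_microbatches(nodes, num_stages, num_microbatches, link_cost):
    """Greedily assign each microbatch a path with one live node per stage.

    link_cost(src, dst) returns the cost of sending from node name `src`
    to node name `dst`; `src` is None for the first stage.
    Returns the list of paths that could be routed (possibly fewer than
    requested if some stage runs out of live capacity).
    """
    remaining = {n.name: n.capacity for n in nodes if n.alive}
    by_stage = {
        s: [n for n in nodes if n.alive and n.stage == s]
        for s in range(num_stages)
    }
    routes = []
    for _ in range(num_microbatches):
        path, prev = [], None
        for s in range(num_stages):
            candidates = [n for n in by_stage[s] if remaining[n.name] > 0]
            if not candidates:
                path = None  # this stage is saturated or entirely crashed
                break
            # Pick the cheapest next hop from the previous node.
            best = min(candidates, key=lambda n: link_cost(prev, n.name))
            path.append(best.name)
            prev = best.name
        if path is None:
            break
        # Commit capacity only once a complete path has been found.
        for name in path:
            remaining[name] -= 1
        routes.append(path)
    return routes
```

Calling `route_microbatches` again after marking a node as not alive re-plans around the crash: the surviving nodes of that stage absorb traffic until their capacity is exhausted. A decentralized scheme would have to reach a comparable assignment without the global view this sketch relies on.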