Training Diffusion Models with Federated Learning

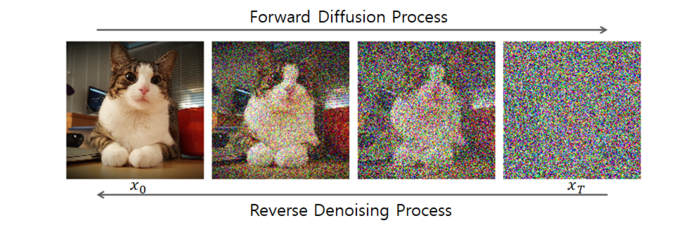

We introduce a federated diffusion framework that lets clients train denoising diffusion probabilistic models (DDPMs) independently and in a privacy-preserving way, without exposing their local data. By adapting Federated Averaging (FedAvg) and making efficient use of the UNet backbone, our method reduces the number of parameters exchanged by up to 74% compared to naive FedAvg, while keeping image quality, as measured by Fréchet Inception Distance (FID), close to that of centralized training.
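For orientation, the naive FedAvg baseline mentioned above can be sketched as a dataset-size-weighted average of client parameters. This is a minimal illustration of standard FedAvg aggregation only, not the reduced-communication variant proposed here; the function name `fedavg`, the `Params` type, and the flat-list parameter layout are assumptions made for the example.

```python
from typing import Dict, List

# Hypothetical layout: each parameter tensor is flattened to a list of floats.
Params = Dict[str, List[float]]


def fedavg(client_params: List[Params], client_sizes: List[int]) -> Params:
    """Average client parameters, weighting each client by its local dataset size."""
    if not client_params or len(client_params) != len(client_sizes):
        raise ValueError("need one dataset size per client, and at least one client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total number of samples must be positive")

    averaged: Params = {}
    for name in client_params[0]:
        length = len(client_params[0][name])
        # Weighted mean of every scalar entry across clients.
        averaged[name] = [
            sum(p[name][i] * n for p, n in zip(client_params, client_sizes)) / total
            for i in range(length)
        ]
    return averaged


# Two clients holding 1 and 3 samples respectively.
global_params = fedavg(
    [{"w": [0.0, 4.0]}, {"w": [4.0, 0.0]}],
    [1, 3],
)
print(global_params)  # {'w': [3.0, 1.0]}
```

In this naive form every client sends its full parameter set each round, which is the communication cost that the 74% reduction is measured against.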