Parameterizing federated continual learning for reproducible research

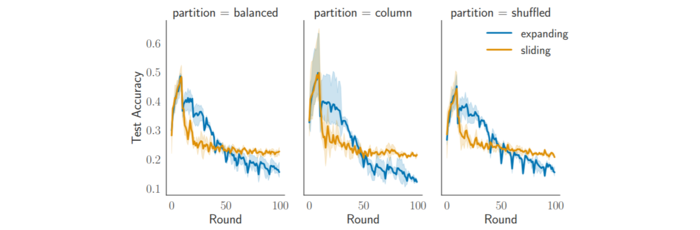

We present Freddie, the first fully configurable framework for Federated Continual Learning (FCL), designed to reproduce complex, evolving learning scenarios. It supports large-scale deployments via containerization and Kubernetes, enabling precise experimentation. Demonstrations on CIFAR-100 and on heterogeneous task sequences show Freddie's effectiveness and uncover persistent performance challenges in real FCL settings.
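To make "parameterizing" an FCL scenario concrete, the following is a minimal sketch of how a class-incremental scenario on a 100-class dataset such as CIFAR-100 might be described by a handful of parameters and expanded deterministically into per-client task sequences. It is not Freddie's actual configuration schema or API: `ScenarioConfig`, `build_task_sequences`, and every field name are hypothetical and chosen only for illustration.

```python
import random
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ScenarioConfig:
    """Hypothetical scenario parameters (illustrative; not Freddie's schema)."""
    num_clients: int = 4
    num_tasks: int = 5
    classes_per_task: int = 20
    num_classes: int = 100  # CIFAR-100 has 100 classes
    seed: int = 0


def build_task_sequences(cfg: ScenarioConfig) -> Dict[int, List[List[int]]]:
    """Map each client id to an ordered list of tasks (lists of class ids)."""
    if cfg.num_tasks * cfg.classes_per_task > cfg.num_classes:
        raise ValueError("tasks require more classes than the dataset provides")

    sequences: Dict[int, List[List[int]]] = {}
    for client_id in range(cfg.num_clients):
        # A per-client generator derived from the global seed keeps the
        # scenario reproducible while letting task sequences differ by client.
        rng = random.Random(cfg.seed * 10_000 + client_id)
        classes = list(range(cfg.num_classes))
        rng.shuffle(classes)
        sequences[client_id] = [
            sorted(classes[t * cfg.classes_per_task:(t + 1) * cfg.classes_per_task])
            for t in range(cfg.num_tasks)
        ]
    return sequences


if __name__ == "__main__":
    cfg = ScenarioConfig(num_clients=2, num_tasks=5, classes_per_task=20, seed=42)
    for client_id, tasks in build_task_sequences(cfg).items():
        print(client_id, [len(task) for task in tasks])
```

Because the sequences depend only on the configuration values, rerunning with the same `ScenarioConfig` yields the same scenario, which is the property a reproducible FCL experiment needs. Under this assumed setup, heterogeneity comes from each client drawing its own class ordering.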